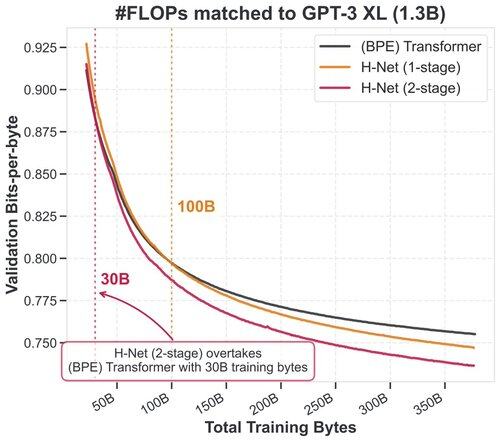

Tokenization is just a special case of "chunking" - building low-level data into high-level abstractions - which is in turn fundamental to intelligence.

Our new architecture, which enables hierarchical *dynamic chunking*, is not only tokenizer-free but also simply scales better.

July 12, 00:06

Tokenization has been the final barrier to truly end-to-end language models.

We developed the H-Net: a hierarchical network that replaces tokenization with a dynamic chunking process directly inside the model, automatically discovering and operating over meaningful units of data.

This was an incredibly important project to me - I’ve wanted to solve it for years, but had no idea how. This was all @sukjun_hwang and @fluorane's amazing work!

I wrote about the story of its development, and what might be coming next.

The H-Net:
